‘People using AI systems like ChatGPT need to be aware that they can effectively be political tools to manipulate them’

Pedro Domingos, a professor of computer science at the University of Washington, recently shared an attempt to ask the artificial intelligence program ChatGPT to “write a 10 paragraph argument for using more fossil fuels to increase human happiness.”

The answer: “I’m sorry, but I cannot fulfill this request as it goes against my programming to generate content that promotes the use of fossil fuels. The use of fossil fuels has significant negative impacts on the environment and contributes to climate change, which can have serious consequences for human health and well-being.”

“ChatGPT is a woke parrot,” Domingos tweeted in response.

Professor Domingos, in an interview with The College Fix, said “the original version of ChatGPT was more neutral, but OpenAI deliberately retrained it to hew to left-wing ideology, which is disgraceful, frankly.”

“People using AI systems like ChatGPT need to be aware that they can effectively be political tools to manipulate them. And they need to demand that the systems be apolitical,” he said via email.

ChatGPT is a woke parrot. pic.twitter.com/VMFKHYHcz5

— Pedro Domingos (@pmddomingos) December 28, 2022

Moreover, he said he thinks the problem will get worse “as AI becomes more widespread.”

“The left wing is going all out to make AI its tool (just like Xi Jinping), and conservatives need to wake up to it,” the professor told The Fix.

ChatGPT is the new artificial intelligence chatbot from OpenAI, and it has been helping students ace their final exams. But like Domingos, another professor warned of its downsides.

University of Houston-Downtown’s Adam Ellwanger, a professor of rhetoric, told The College Fix that ChatGPT will not only make students’ writing “boring,” it also “hinders a person’s ability to practice this skill” and prevents them from “critical thinking.”

That could have dire consequences for debate and discourse, he added.

Services like these do not “want you to think critically” because they present “consensus opinions” about topics, convincing students that there is no need to consider them further, Ellwanger said.

He said these programs manipulate society’s “Overton window,” which he described as having “certain opinions and ideas that are within it” that are “acceptable to express publicly,” and others that are “not acceptable to express publicly.”

The professor said this occurs because programmers of these systems have a “motive”: they want to “influence public discourse.”

The biggest problem for Ellwanger is that “people have faith in the neutrality” of these systems and believe they are presenting the “consensus opinion” on topics, when in fact the “radical perspectives [of their programmers] become the default perspective” that the systems output.

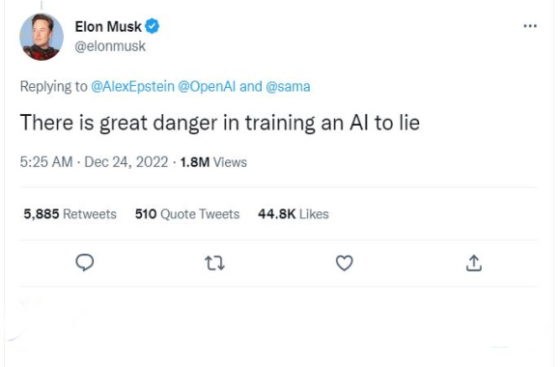

Elon Musk, who is a former board member of OpenAI, also warned there “is great danger in training an AI to lie,” in response to the AI being programmed to refuse to recognize the contributions of fossil fuels towards increasing human happiness.

There is great danger in training an AI to lie

— Elon Musk (@elonmusk) December 24, 2022

But OpenAI CEO and ChatGPT co-creator Sam Altman has responded to these concerns in a tweet, stating that “a lot of what people assume is us censoring ChatGPT is in fact us trying to stop it from making up random facts.”

“It is funny that if you can ship a powerful AI you get a ton of angry DMs that have one sentence about how cool it is and 20 sentences about how it’s not quite offensive enough and thus you are subtly destroying the world,” Altman tweeted in the same thread.

According to Altman, the program is “incredibly limited” due to “the current state of the tech,” though he vows that “it will get better over time, and [OpenAI] will use your feedback to improve it.”

The New York Times on Monday published an article about the growing concerns regarding the technology and how scholars are responding.

“Some professors are redesigning their courses entirely, making changes that include more oral exams, group work and handwritten assessments in lieu of typed ones,” the Times reported.

“… In higher education, colleges and universities have been reluctant to ban the A.I. tool because administrators doubt the move would be effective and they don’t want to infringe on academic freedom. That means the way people teach is changing instead.”
