Or robots are mostly white because they fit the ‘home decor’

Maybe robotic vacuums should come with an implicit-bias instructional video.

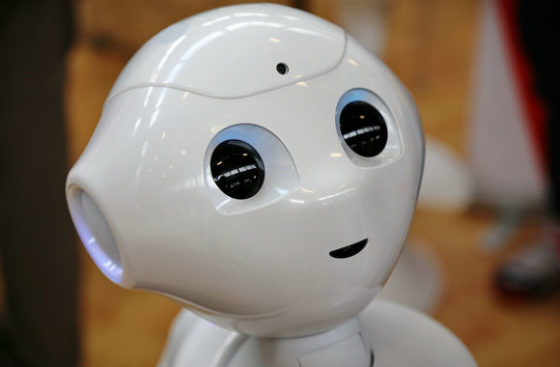

An international group of university researchers has found that humans perceive the color of “anthropomorphic” robots the same way they perceive race in humans.

Hence “the same race-related prejudices that humans experience extend to robots,” reports IEEE Spectrum, the magazine of the Institute of Electrical and Electronics Engineers.

Just like California Polytechnic State University-San Luis Obispo and its problem with too many white students, and Harvard’s efforts to keep down its Asian-American population, the robot industry has a racial diversity problem.

Lead author Christoph Bartneck, a professor in the Human Interface Technology Lab at the University of Canterbury in New Zealand, says “humanoid” robots are almost entirely “white or Asian,” and the exceptions are typically modeled after specific individuals:

Today racism is still part of our reality and the Black Lives Matter movement demonstrates this with utmost urgency. At the same time, we are about to introduce social robots, that is, robots that are designed to interact with humans, into our society. These robots will take on the roles of caretakers, educators, and companions. …

This lack of racial diversity among social robots may be anticipated to produce all of the problematic outcomes associated with a lack of racial diversity in other fields. … If robots are supposed to function as teachers, friends, or caretakers, for instance, then it will be a serious problem if all of these roles are only ever occupied by robots that are racialized as white.

Bartneck admits that there’s no obvious racial animus motivating the “racially diverse community of engineers” to design white robots:

But our implicit measures demonstrate that people do racialize the robots and that they adapt their behavior accordingly. The participants in our studies showed a racial bias towards robots.

An earlier Spectrum article from last year’s Consumer Electronics Show offers another explanation: “Social home robots” that are white fit the home decor better.

The research was conducted using the “shooter bias task.” The task seeks to measure “automatic prejudice” toward white versus black men: participants are shown photos for a split second and asked to judge whether the person pictured is holding a gun or a “benign object.”

Bartneck said the paper went through “an unparalleled review process,” with nine reviewers and accusations of “sensationalism and tokenism,” though the methods and statistics of the paper were “never in doubt.”

When it came time to present the paper at the ACM/IEEE International Conference on Human-Robot Interaction in March, conference organizers barred even a “small panel discussion” on it and told Bartneck to present it “without any commentary,” he claims:

All attempts to have an open discussion at the conference about the results of our paper were turned down. … Why would you expose yourself to such harsh and ideology-driven criticism?

The researchers are already on to the next facet of their study: how much bias we have against robots with “several shades of brown.”

Bartneck’s research collaborators came from Guizhou University of Engineering Science, Monash University in Australia and the University of Bielefeld in Germany.

IMAGE: MikeDotta/Shutterstock